Sometime near the beginning of 2019, the lab manager at my university came up to me and asked me “Can you make a thing with a Raspberry Pi that will record a 10-second buffer of images and save it to disk whenever a pulse comes down a trigger line?” I’m a Known Linux Geek and I spend an upsetting amount of time in that lab, so it’s not entirely out of left field that I’d get asked to do something like this. I said yes, because well, it didn’t sound that hard. I then spent the next four months learning the meaning of Scope Creep.

Some more details:

- There’s a project to monitor a large population of penguins for various scientific endeavours
- There’s no real internet on the island: No maintenance possible, no way to offload images taken
- The device has to last through six months and potentially thousands of image captures about ten meters away from the ocean
- Use a USB webcam for the cable length and image quality
- Capture high-quality, 10-fps stills of 5 seconds before and 5 seconds after a penguin steps on a trigger plate
- Store all information to a flash drive that can be easily removed
- System should be plug-and-play: no deployment needed

The initial design wasn’t too rough. I’m not a good programmer, but I am a capable one, and I was pretty sure I’d be able to handle this one. I did, but hoo boy, did some stuff change.

I’m not a programmer. I’m studying for an electrical engineering degree. I actually kinda wanted to be a microbiologist. Do not let the fact that I run Linux on everything, or that I spend about 10% of my day writing scripts, or that this blog post was assembled by my own templating tool fool you: none of this is what I’m trying to do. I like Linux because it lets you do some truly awful munging together of different bits and pieces of things other people have written better than you ever could to produce something useful. Keep this in mind as I go through how this all works.

I bashed out the basic system in a couple of hours. It’s written in python because I didn’t trust myself to do memory management for code that could potentially run for weeks at a time. In fact, a healthy distrust of my own ability could be the theme of this entire project. It’s pretty simple: it’s built on OpenCV, which is a little heavy but is well supported for interfacing with video devices on the Pi3. The program spins up a threading.Thread to continuously buffer OpenCV image captures into a python collections.deque, because a deque can be assigned a maximum length, turning it into a circular buffer. The thread has a few functions that flip some semaphores telling it to stop, keep the current image buffer plus the next few seconds of frames, and then save all of that to disk. I wrote a state chart and everything.
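For a sense of what that looks like, here is a minimal sketch of the deque-as-circular-buffer idea. It is not the code that shipped: the class name `RingCapture`, its parameters, and the `grab_frame` callable are all made up for illustration, and a plain `threading.Event` stands in for whatever flags the real thread used.

```python
import threading
import time
from collections import deque


class RingCapture(threading.Thread):
    """Buffer frames continuously; on trigger, keep the frames before and after."""

    def __init__(self, grab_frame, fps=10, pre_frames=50, post_frames=50):
        super().__init__(daemon=True)
        self.grab_frame = grab_frame    # hypothetical callable returning one frame
        self.period = 1.0 / fps
        self.post_frames = post_frames
        # maxlen makes the deque silently drop its oldest frame when full
        self.buffer = deque(maxlen=pre_frames)
        self.triggered = threading.Event()
        self.stopped = threading.Event()
        self.captures = []              # one list of frames per trigger

    def trigger(self):
        self.triggered.set()

    def stop(self):
        self.stopped.set()

    def run(self):
        while not self.stopped.is_set():
            self.buffer.append(self.grab_frame())
            if self.triggered.is_set():
                frames = list(self.buffer)          # what happened before the trigger
                for _ in range(self.post_frames):   # what happens after it
                    time.sleep(self.period)
                    frames.append(self.grab_frame())
                self.captures.append(frames)
                self.buffer.clear()
                self.triggered.clear()
            time.sleep(self.period)
```

With OpenCV, `grab_frame` could be something like `lambda: camera.read()[1]` for a `camera = cv2.VideoCapture(0)`, and the saved frames would be written to disk rather than kept in a list. Both of those are assumptions about how the pieces would be joined up.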

The images are captured as stills rather than video since they need to be high-quality enough for computer vision work in the future. I wired up a basic command-line debugging interface to manually trigger captures, and used RPi.GPIO’s very useful GPIO Interrupt Callback feature to respond to inputs on the trigger line.
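The interrupt callback wiring is short enough to sketch. This is an illustration only: the function name `arm_trigger`, the pin number in the usage comment, the pull-down, the rising edge and the 200 ms debounce are all assumptions, not details from the real build. The GPIO module is passed in as a parameter so the sketch can be exercised on a machine that isn't a Pi.

```python
def arm_trigger(gpio, pin, on_trigger, debounce_ms=200):
    """Call on_trigger() whenever a rising edge arrives on the given pin.

    `gpio` is expected to be the RPi.GPIO module (or anything with the same
    setmode / setup / add_event_detect interface).
    """
    gpio.setmode(gpio.BCM)                                # Broadcom pin numbering
    gpio.setup(pin, gpio.IN, pull_up_down=gpio.PUD_DOWN)  # idle low (assumed)
    gpio.add_event_detect(
        pin,
        gpio.RISING,
        # RPi.GPIO passes the channel number to the callback; ignore it here
        callback=lambda channel: on_trigger(),
        bouncetime=debounce_ms,
    )


# On the Pi itself this might be used as (pin 17 is a made-up example):
#   import RPi.GPIO as GPIO
#   arm_trigger(GPIO, 17, capture_thread.trigger)
```

The callback runs on a separate thread inside RPi.GPIO, so it should only flip a flag and return, leaving the slow work to the capture thread.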

I scripted some careful abuse of mount(8) and the permissions system to mount the first external drive found to a predefined location. I did do the thing of enabling NOPASSWD sudo, which I figured would be fine since it was going on an island populated with nothing but penguins for six months with no internet connection. It’s as close to unhackable as I’m going to get in life.

I actually wrote a config file (which is really just a python file containing only variables) so this is a pretty flexible piece of software. Cool, time to hand this off and see how it runs.

Ah, here we go. First problem: The images are captured at 1280×720 pixels, which means reasonably large images, a meg or two each. There are at least 100, up to 200 images captured every time a penguin is detected, so we’d run out of space on any normal flash drive real fast. This is the first truly awful hack I used. The images are still captured as stills (numbered 1.jpg to 200.jpg), because otherwise the camera tends to blur motion a lot more. So, we capture stills, commit them to file, and then I use os.system() to call ffmpeg(1) with the following command:

    ffmpeg -r 10 -f image2 -i /path/to/images/%d.jpg /path/to/output.mp4 2>/dev/null > /dev/null

This takes the contents of the folder, in order, and converts it to an mp4 at 10fps. Another call is used to rm *.jpg in the same directory as soon as the video is done. I also added a cronjob that goes through and deletes any jpegs left over in the video storage directories at midnight, just in case they start to build up. Sure would be cool if I could be certain the system would keep time, but either way this should be reliable enough.
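For anyone copying this trick, here is a hedged sketch of the same ffmpeg call made through `subprocess.run` rather than os.system(). That is a swap, not what the original code did. The function names and the `run` parameter are invented for the example. The one behavioural difference is that the JPEGs are only deleted if ffmpeg exits cleanly.

```python
import subprocess
from pathlib import Path


def build_ffmpeg_command(image_dir, output_path, fps=10):
    """Same flags as the shell command, expressed as an argument list."""
    return [
        "ffmpeg",
        "-r", str(fps),
        "-f", "image2",
        "-i", str(Path(image_dir) / "%d.jpg"),
        str(output_path),
    ]


def encode_and_clean(image_dir, output_path, fps=10, run=subprocess.run):
    """Encode the numbered stills, then delete them only if ffmpeg succeeded."""
    result = run(
        build_ffmpeg_command(image_dir, output_path, fps),
        stdout=subprocess.DEVNULL,   # equivalent of > /dev/null
        stderr=subprocess.DEVNULL,   # equivalent of 2>/dev/null
    )
    if result.returncode != 0:
        return False                 # keep the JPEGs so nothing is lost
    for jpeg in Path(image_dir).glob("*.jpg"):
        jpeg.unlink()
    return True
```

Passing the arguments as a list also sidesteps shell quoting if a path ever contains a space.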

At this point everything is fine. The system reliably captures images, burns some CPU churning out a video, and wipes the old stuff. The systemd services restart when power is lost, and the cronjob will clear out any mistakes. So now we get the next request: two cameras!

I, at this point, do not want to try and write a system that will dynamically handle up to N cameras plugged into the raspberry pi. I add some parameters to the __init__ function for the thread that let you select which camera you’ll be using (OpenCV creates a capture device with VideoCapture(N) where N is 0 for the first camera, 1 for the next and so on) and so now I have two capture threads. I duplicate some code, including the images deque and the function calls that tell each thread to capture. Set everything running on my laptop, works fine! Let’s copy it to the Pi3 and oh no, we’ve made a mistake.

The capture works fine… so long as I record at 640×480 pixels. I spend a few days bashing my head against this, and I realise that because the RPi3 shares a single slow USB controller between all four USB ports AND the ethernet port, I have a serious bandwidth bottleneck. In addition, high resolution images take up a lot of space in the memory deque, so I wouldn’t be able to record for as long. Ultimately I decide that this isn’t worth fixing: I can either take two shorter, low-res streams, or one longer, high-res stream. This is fine. Incidentally, the Raspberry Pi 4, with a dedicated USB-3 controller and separate gigabit ethernet controller (as well as much more memory) would have solved this problem handily, but it wasn’t announced until long after I was finished.
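Some back-of-the-envelope arithmetic shows why this bites. The sketch below assumes the webcam sends uncompressed YUYV (2 bytes per pixel) and that each buffered frame sits in memory as a decoded 8-bit BGR array (3 bytes per pixel), with a 100-frame buffer covering 10 seconds at 10 fps. A camera that sends MJPEG would need far less bus bandwidth, so treat the numbers as a rough upper bound rather than a measurement.

```python
# USB 2.0 signals at 480 Mbit/s; this is the theoretical ceiling in MB/s,
# and real-world throughput is noticeably lower.
USB2_CEILING_MB_S = 480 / 8


def frame_bytes(width, height, bytes_per_pixel):
    return width * height * bytes_per_pixel


def stream_mb_per_s(width, height, fps, bytes_per_pixel=2):
    """Bus bandwidth of one uncompressed stream (2 bytes/pixel assumes YUYV)."""
    return frame_bytes(width, height, bytes_per_pixel) * fps / 1e6


def buffer_mb(width, height, frames, bytes_per_pixel=3):
    """RAM needed to hold `frames` decoded frames (3 bytes/pixel: 8-bit BGR)."""
    return frame_bytes(width, height, bytes_per_pixel) * frames / 1e6


for name, (w, h) in {"1280x720": (1280, 720), "640x480": (640, 480)}.items():
    print(name,
          f"{stream_mb_per_s(w, h, fps=10):.1f} MB/s per camera,",
          f"{buffer_mb(w, h, frames=100):.1f} MB per 100-frame buffer")
```

Under those assumptions one 1280×720 stream wants about 18 MB/s and its buffer about 276 MB. Two of them would want roughly 37 MB/s from a bus that tops out at 60 MB/s on paper and also carries the flash drive and ethernet, plus about 553 MB of the Pi 3's 1 GB of RAM. At 640×480 the same figures drop to about 6 MB/s and 92 MB per camera.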

I’m now more or less done. I can flip between single or double camera inputs and configure them as needed. I spent a few hours in the lab after I finished my first three exams and showed it to the lab manager, who said it was good. I spent a little while getting it set up, and we settled on only using one camera because resolution was more important than multiple angles. The whole thing is due to ship out a few days after my last exam, so I go in after my exam to wire my system in and get it set up, which takes maybe an hour. I spend a little while making sure it all fits inside the sealed lunchboxes we’re using to prevent the ocean from immediately decimating everything.

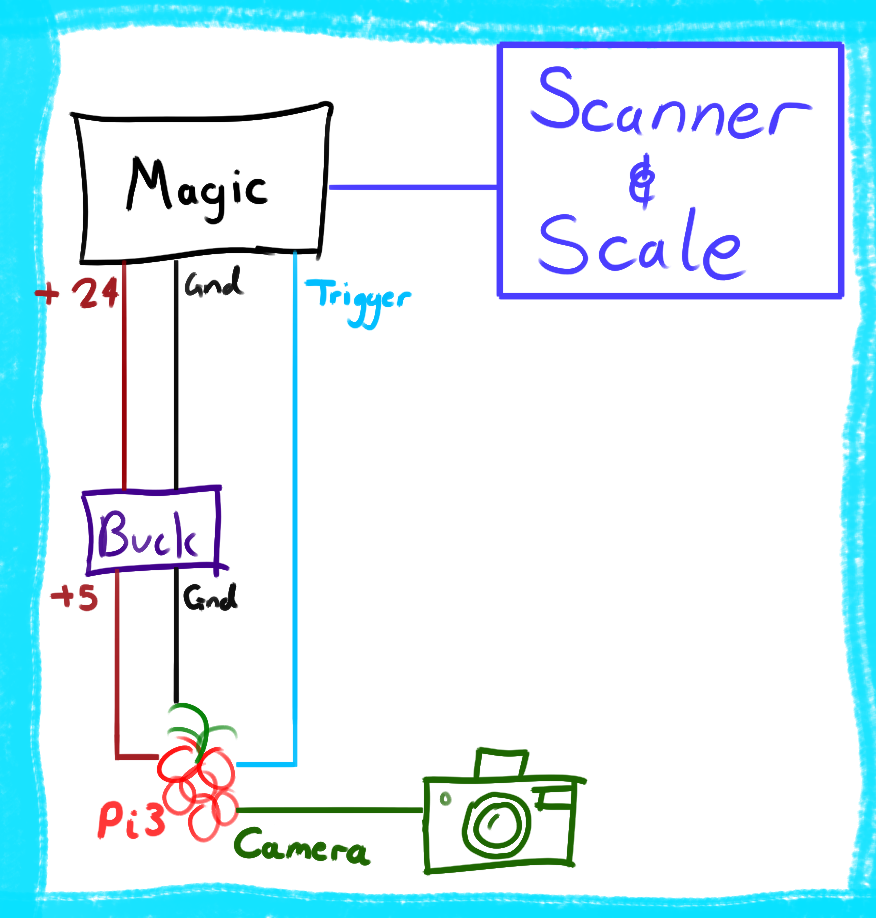

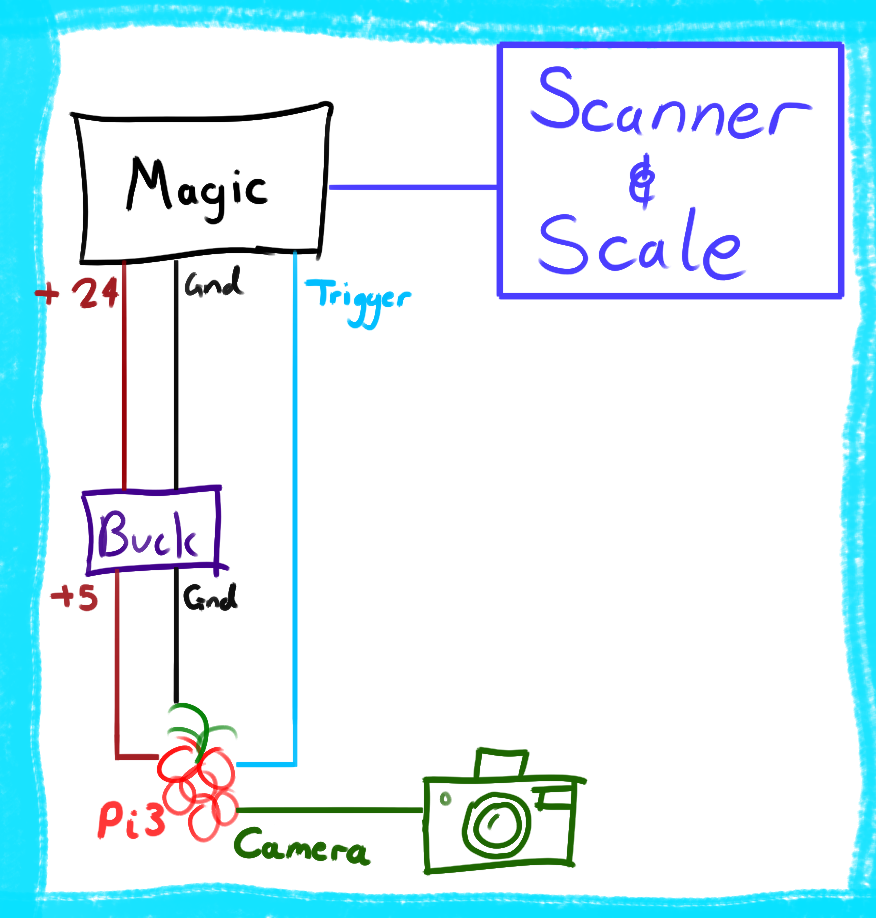

At this point it might be beneficial to understand the topology of the system as I understood it:

There’s Some Magic that supplies unlimited +24V plus ground power, and a trigger signal. I have a buck converter that gives me the +5V I need to run my stuff. I take pictures with the camera, and save them when I get a pulse down the trigger line. The magic knows to send me a trigger because it is connected to the scale and scanner, which can detect a penguin walking past. I just have to set up the wire that carries 24V, Ground and Trigger. I’ve documented all of this, so the cables are all made when I get there. I wire everything up, do some checks and see that it’s all working. I think I’m about done.

At 1800 that same day, I get a message from the lab manager: “I may have a significant problem with my design and you may be able to solve it.”

Oh no.

The Magic noted above is a simple ARM dev board wired up to various other systems, and was made by the lab manager. It handles all sorts of things, it reads from the scanner and the scale, it corrects for sensor drift, it sends logging metadata to the web over a GSM modem, it manages the two car batteries providing power for the whole thing, and it sends the trigger message to my little device. One small problem. For whatever reason, the USB serial connection the RFID reader uses is not playing nice with the devboard. Now, if we had time, we could debug it and get it working and I’d be fine. We do not have time, we have two days.

He suggests that I use the Pi3’s USB port to read in from the RFID reader and push that information out over the Pi3’s UART lines, which he knows he can read perfectly. It’s at this point that the awful Linux gremlin who lives in my brain rears their head.

Linux represents almost everything as a file. A serial port, such as the kind a USB serial device would present, shows up as something like /dev/ttyUSB0. If you’ve got this configured correctly, you can read and write to the serial port by just treating it like a normal file.

So I enable UART serial in raspi-config and figure out how to use stty(1), a tool which configures TTY’s, and I write this shell script:

    #!/bin/bash

    # Configure both as 115200 baud TTYs (-F names the device to configure)
    stty -F /dev/ttyAMA0 115200
    stty -F /dev/ttyACM0 115200

    # Relay everything the RFID reader sends to the Pi3's UART
    cat /dev/ttyACM0 > /dev/ttyAMA0

Unnecessary use of cat(1), I know, don’t worry, it gets worse.

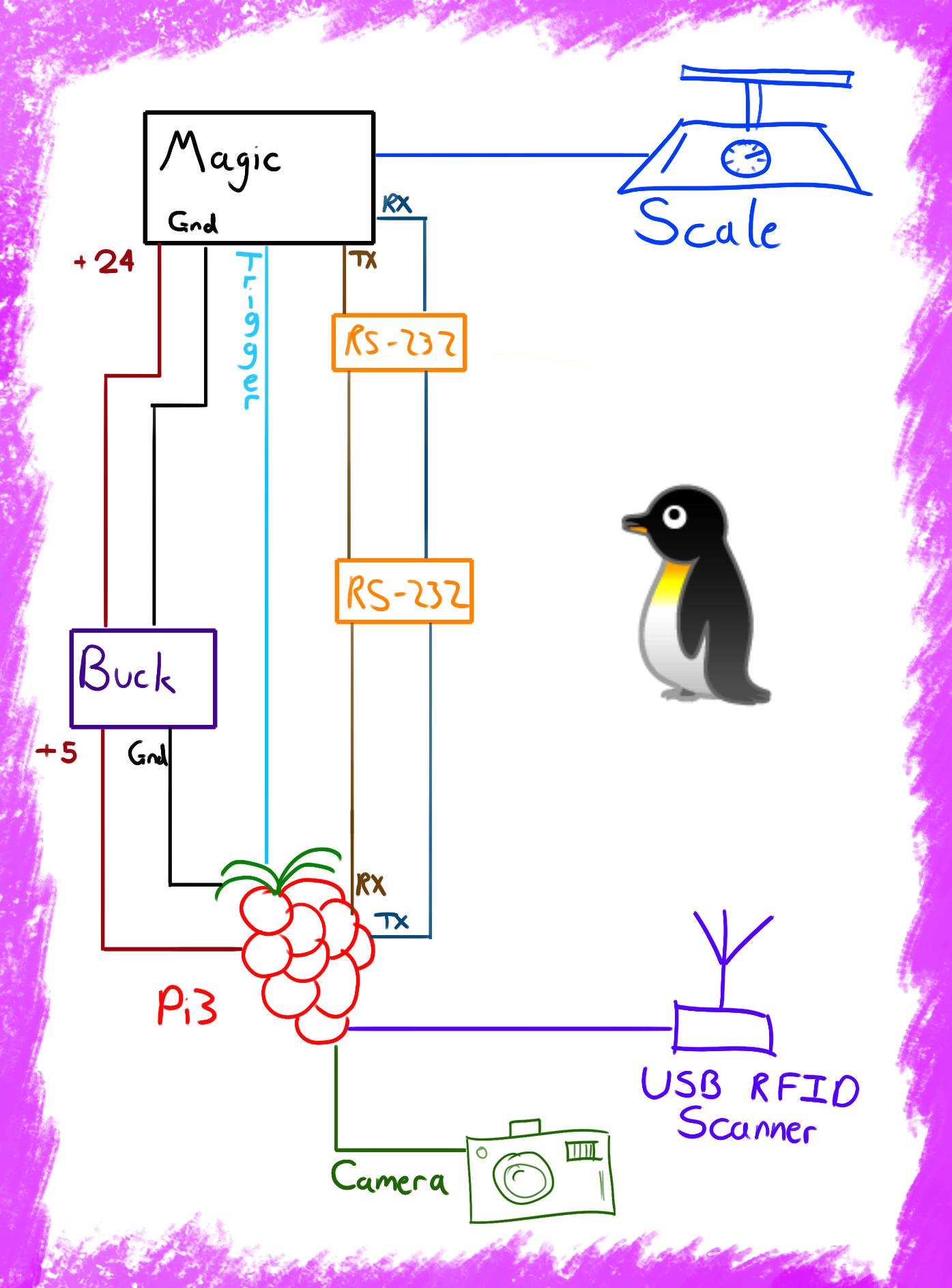

This runs on boot, which means it’ll relay the incoming serial from ttyACM0, which is the RFID reader, to ttyAMA0, the Pi3’s UART device. I’m at home, so I emulate this using an Arduino Uno acting as a USB Serial Device and a knock-off Saleae Logic Analyzer to read the pins. So now, we add two new wires to the setup, which carry TX and RX between the Pi3 and the Magic (which is getting less Magical by the second). Admittedly, nothing ever flows from Magic to the Pi3, and even if it could, the Pi3 doesn’t have any logic to respond to it.

Great. Cool, it all works, I’ll just go in tomorrow morning, add the new wiring, shuffle some pins around and hand it off.

This actually goes off without too much trouble. I spend most of Sunday stripping and crimping wires and making sure I match the red line to the red line. I also add in an RS-232 voltage-shifting board to reduce the risk of the wimpy 3v3 UART signal being lost as it goes over the line. If you want a sense of what a mess this is, here’s a tweet. Yes, that’s a screw-terminal connected to jumper wires. I was having an extremely Normal Day.

I eventually get everything wired up in the right order, as well as tossing a tee(1) (incidentally allowing me to drop cat(1) and just use the file redirector) into the last line of that script so that it can log the incoming RFID detections to a file, just as a backup.
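The relay-plus-log idea is small enough to show in Python too, purely as an illustration. The real thing stayed a shell one-liner. The function name `relay` is invented, and the sketch assumes the serial ports have already been configured (for example with stty) and opened as ordinary binary files.

```python
def relay(source, sink, log):
    """Copy each line from source to sink, keeping a backup copy in log.

    All three arguments are binary file objects. Returns the number of
    lines relayed once source reaches end-of-file.
    """
    count = 0
    for line in source:
        sink.write(line)
        sink.flush()     # push it down the UART immediately
        log.write(line)
        log.flush()      # so a power cut loses as little as possible
        count += 1
    return count


# On the Pi this might be wired up as follows (log path is hypothetical):
#   with open("/dev/ttyACM0", "rb", buffering=0) as rfid, \
#        open("/dev/ttyAMA0", "wb", buffering=0) as uart, \
#        open("/path/to/logfile.txt", "ab") as log:
#       relay(rfid, uart, log)
```

This is "everything is a file" doing the heavy lifting: the function has no idea it is talking to serial hardware.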

At this point the system looks like this:

So, we’re done. The system ships out tomorrow. It’s 2000 at night. I got a free lunch out of this. Well done team, case closed.

I get a message at 2055. There are concerns that because we’re now sending the 3V “Take Picture” signal down the line with UART next to it, there might be noise. Plus, since the Pi3 is already in the middle of everything, it could really just trigger whenever it sees the RFID scanner pick something up. It’d be a lot more independent this way, too. The video still gets taken if the signal comes in, but this way we have a lot more data.

Here’s where I started going a little nuts. See, I can do all of this. I ended up doing all of this, and it works, but remember the original problem? Part of the reason I was confident I could do this was that, at worst, my part fails and nothing too bad happens. NOW, however, my section is squarely in the thick of things. If my code goes down, it takes down the entire RFID scanning system, which would be, uh, less than ideal.

Oh, and the system will be shipped out tomorrow morning. I can’t test on the system. I’m writing this add-on blind.

I rebuild my little testing jig: An Arduino Uno to simulate the RFID reader, a logic analyzer to read the UART-out.

I decide to bundle all the functionality into the python script handling the cameras. In order to do this, I grab a program called ttylog off sourceforge. ttylog is supposed to be for logging a tty to a file, which means it prints to stdout. I use it to feed the incoming stream from the RFID reader through a tee(1) and into my python script. The python script reads the incoming tag information, determines if it’s a real tag or just noise with a regex, and if it’s a valid tag, fires the “take picture” function as well as writing just the tag number to the outgoing TTY file. The line that runs this is as follows:

    ttylog --baud 115200 --device /dev/ttyAMA0 --flush | tee /path/to/logfile.txt | python3 tuxcap.py

A few modifications to the code mean that now if an image is set off by serial, it gets saved along with the ID that set it off, for future Machine Learning purposes. I’ll upload my code when I uh, get the most recent form back from the lab manager. This was not a normal development cycle.
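The Python end of that pipe can be sketched roughly as below. The tag format is a pure guess for the sake of the example (a line holding exactly 15 decimal digits), and `parse_tag`, `handle_stream`, `take_picture` and `uart_out` are hypothetical names, not the ones in tuxcap.py.

```python
import re

# Hypothetical tag format: exactly 15 decimal digits on a line by themselves.
# The real reader's format is not given, so adjust the pattern to suit.
TAG_PATTERN = re.compile(r"^\s*(\d{15})\s*$")


def parse_tag(line):
    """Return the tag number if the line looks like a real tag, else None."""
    match = TAG_PATTERN.match(line)
    return match.group(1) if match else None


def handle_stream(lines, take_picture, uart_out):
    """Fire take_picture(tag) and forward the tag for every valid line."""
    seen = []
    for line in lines:
        tag = parse_tag(line)
        if tag is None:
            continue                  # serial noise: ignore it and carry on
        take_picture(tag)             # the capture gets saved alongside this ID
        uart_out.write(tag + "\n")    # only the clean tag number goes out
        uart_out.flush()
        seen.append(tag)
    return seen


# At the end of the pipeline this might be called as:
#   import sys
#   handle_stream(sys.stdin, capture_thread.trigger_with_id, open("/dev/ttyAMA0", "w"))
```

Since a crash here now takes the RFID relay down with it, the loop is written so that a garbage line is skipped rather than raising an exception.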

I write this all up, email it off to the lab manager (who is onsite, and heading out to the island to install it the next morning). That morning, I end up TeamViewer’ing into his laptop to do final checks, testing it with whatever slightly-more-valid data he could get his hands on and making sure the serial relay works. After a couple hours, I’ve resolved some weird serial bugs and have a working version. I finish up, write some notes to help him implement the last bits of his microcontroller code that still need work, and hand off. I’m alert through the next morning in case I need to help with the actual on-site installation, but fortunately that call never comes.

So that’s the story of how I, K. Random Undergrad, ended up building a vital part of like three separate research projects. I got this story and half of my degree-required engineering experience work. The goalposts moved a lot, but meeting moving goalposts seems to be a big part of developing anything involving software.

If it breaks, well, not much I can do about that. Even if my code survives, it’s possible that the lens gets messed up, or saltwater invades the camera, or ants burrow through the signal lines. Hopefully that won’t happen. I really hope that doesn’t happen, I’d feel pretty bad if I knew my code ruined that much science all at once. In a few months we’ll recover the disk from the island, and if it’s got enough penguin photos on it, I might take on the task of building an AI that can identify penguins on sight.

- I seriously need to get better at writing cohesive software. This thing is a sprawling mess
- Sprawling messes can still work if you know them well
- The “Everything Is A File” idea is wonderful and cool if you know what you’re doing, but it’s also horrifying
- USB ports have bandwidth limits. This goes double for weird embedded USB ports
- The Raspberry Pi is not particularly cheap, and not particularly good, but it is very versatile
- Given enough motivation, anyone can write software that can survive a good hard fall down the stairs

I’m pretty proud of this. It’s the first Real Actual Science I’ve really worked on, and it’s a heck of a lot more engaging than yet another toy project for a university course. The final state of the code is currently somewhere on the lab manager’s laptop, and I’ll be sure to get hold of it to put it online.

If you feel like bits and pieces of this system would fulfil some need you have, let me know and I’ll direct you to the appropriate version in my git history where it wasn’t crazy levels of custom yet and could still potentially be used for other applications.